What Is AI-Enabled Coding?

AI-enabled coding is a new interview format where the goal is not simply to hand-write a clean solution from scratch. You are given an editor with an AI chat on the side, closer to how many engineers work today with tools like Cursor or Copilot Chat. The key difference is that the interview is still evaluating your technical judgment. The AI is a tool, not the candidate.

When people talk about an AI-enabled coding interview, this is the format they mean: an interview where you can use AI, but you are still responsible for the algorithm, correctness, tradeoffs, and final code quality.

In practice, this means you need to operate more like a tech lead than a pure coder. The important question is not "can the model write code?" but "can you understand the problem, guide the model well, inspect the output, catch mistakes, and drive the code to the correct behavior?" You use the AI, but you still own the result.

Which Companies Use This Format?

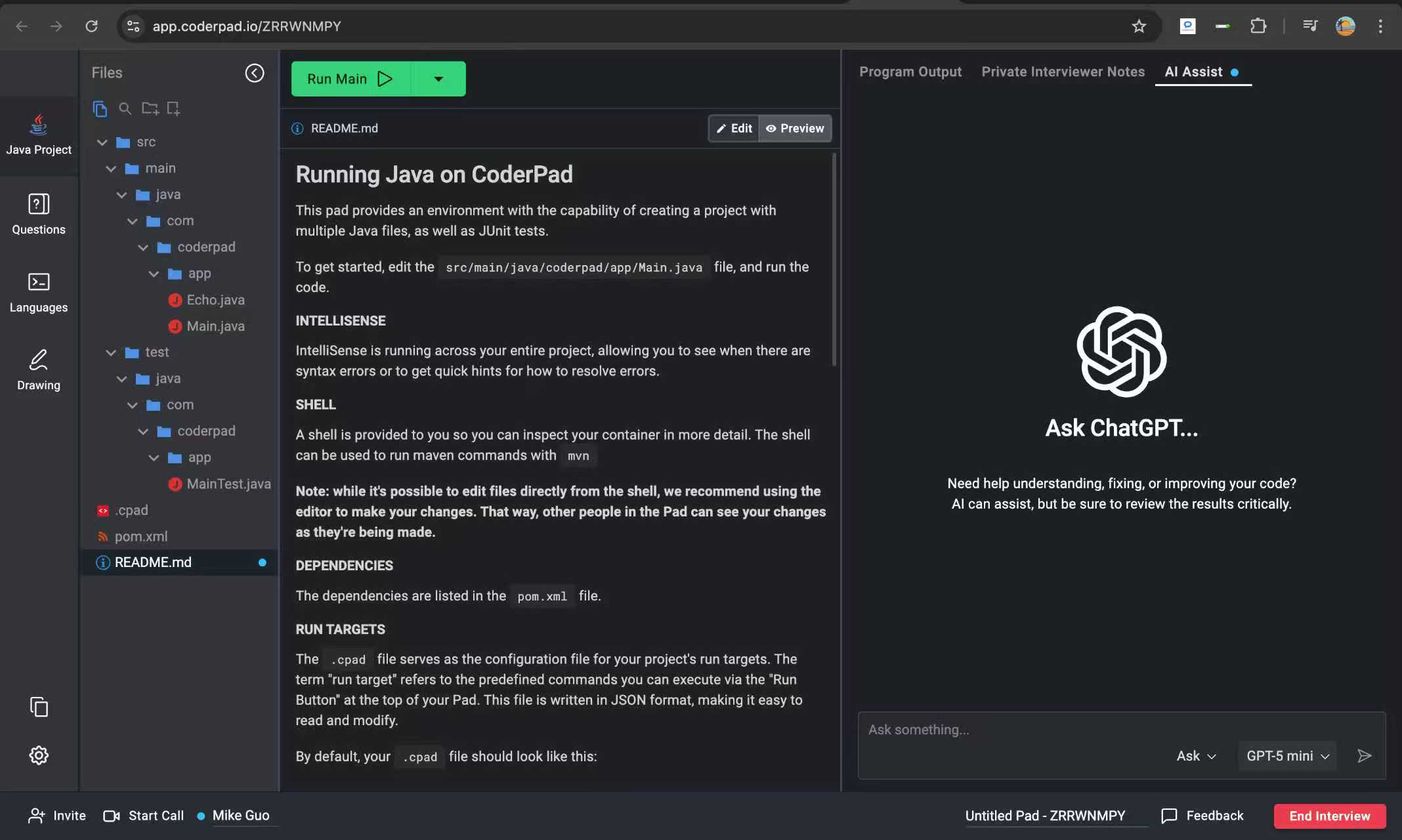

Meta is the most visible adopter. Its AI-enabled coding round launched in late 2025 as part of the onsite loop, using a CoderPad environment with a built-in AI chat panel (discussion). But Meta is not the only company running this format.

Canva replaced its CS fundamentals screening round with an AI-assisted coding competency. Candidates are expected to use tools like Copilot or Cursor, and interviewers stop after each AI generation to ask what the code does. Blind acceptance of AI output is treated as a red flag (Canva engineering blog).

Shopify takes a different approach entirely: candidates work in their own IDE on their own machine over screen share, with whatever AI tools they prefer. There is no sandboxed environment. The evaluation focuses on design instincts, architecture, and whether the candidate is directing the AI rather than being directed by it (discussion).

LinkedIn runs an AI-enabled round using CoderPad with an AI chat panel, where the AI cannot directly modify code and candidates must copy responses into the editor manually. Rippling also allows AI tools during coding rounds but uses a different scoring rubric depending on whether candidates opt in (discussion).

The trend is broad. CoderPad reports that over 35,000 AI-assisted interviews have been run through their platform, with roughly 20–30% of customers enabling AI features. In practice, candidates should prepare for both classic coding rounds and AI-enabled rounds, because the AI-enabled format tests a different mix of skills: codebase reading, test interpretation, bug fixing, and judgment about how to use AI without over-relying on it.

Meta AI Enabled Coding Interview

The Meta AI enabled coding interview is the most prominent company-specific version of this format right now, which is why many candidates use Meta as shorthand for the broader shift toward AI-enabled coding interviews. The environment is typically a CoderPad-style editor with an AI chat panel, but the interview is still grading your judgment, not the model's.

In practice, Meta seems to be testing whether you can break down an unfamiliar problem, steer the AI with precise constraints, catch weak generations, and keep the solution aligned with tests and follow-up requirements. That makes this different from classic Meta prep, where many candidates optimize mainly for writing a clean solution from scratch under time pressure.

- Expect the AI to help with drafting, but not to own the reasoning.

- Expect follow-ups to go beyond baseline correctness into optimization, hidden rules, and implementation judgment.

- Expect interviewer attention on how you review and direct AI output, not just whether you can produce code quickly.

The Interview Environment

The environment usually includes a code editor plus an AI chat panel. The model can be strong, such as GPT-5.3, or it can be smaller and noticeably less capable, like GPT-4o mini, a Claude Haiku model, or Llama 4. Do not assume the AI is perfect, and do not assume it offers a full agent workflow like Cursor, which applies edits directly across the project. In many cases it behaves more like a chatbot that gives you code, explanations, and suggestions that you still need to inspect and apply yourself.

You are also usually given a codebase instead of a single file. That means you need to understand project structure, tests, helper files, and existing abstractions. The interview often starts with failing tests or hidden bugs already in the repo. You may need to find a logic bug, preserve an invariant, infer an unstated rule from test cases, and then add additional features in later follow-up parts.

The practical implication is that the AI may be available without being fully reliable. Prepare for an environment where AI helps with drafting and syntax, not one where it reliably solves the interview for you.

- You are working in a real project layout, not a blank single-file editor.

- Tests are part of the contract, and failing cases often reveal missing rules.

- There may already be bugs in the codebase before you start writing anything.

- The AI is closer to a chat assistant than a full project-editing agent.

- Different models may be available, and smaller models can be less useful on harder parts.

Format

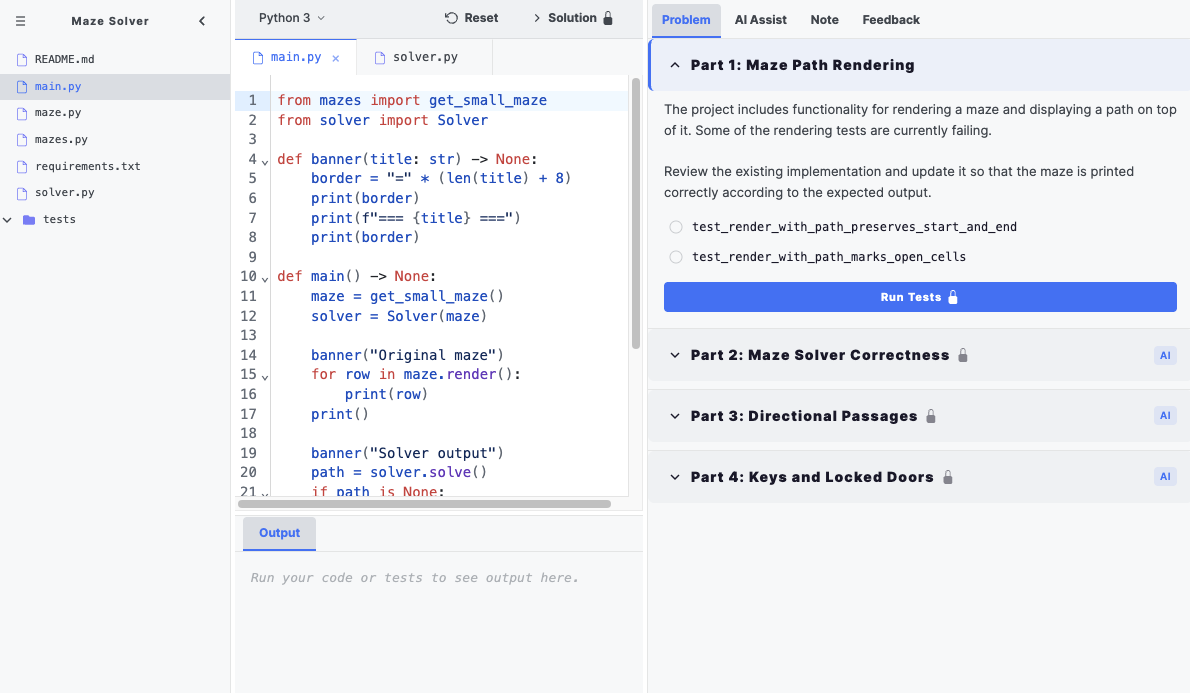

The format is progressive. Early parts may restrict AI usage, especially for basic debugging or reasoning. Later parts usually unlock AI, but the problems become harder and more stateful. Because syntax matters less than in classic interviews, the format can spend more time on follow-up questions and deeper changes to the same problem.

In practice, these interviews are usually structured as a multipart problem rather than a single prompt with one final answer. Our own AI-enabled coding problems use four parts for that reason. The earlier parts tend to focus on orientation, debugging, and understanding the rules of the codebase.

Middle parts tend to shift into core implementation. You may need to add a missing behavior, build the main algorithm, or make an existing system pass a broader set of tests. This is where AI assistance can be useful, but only if you already know the direction and can review the output carefully.

Later parts usually deepen the same problem with additional constraints, performance requirements, new edge cases, or extra features. That is why AI-enabled coding can feel harder than expected: lower syntax pressure creates more room for follow-ups, optimization, and engineering judgment.

What Companies Test in AI-Enabled Coding Interviews

The interview is testing more than whether you can chat with an LLM. It is testing whether you can decompose the problem, give the AI the right constraints, and then act responsibly on the output.

- Can you understand the codebase and the failing tests quickly?

- Can you prompt the AI with the right requirements instead of vague requests?

- Can you inspect the output and decide what is usable versus wrong?

- Can you recognize when the AI is hallucinating based on general training data instead of the actual repo?

- Can you reason about complexity, state, and edge cases without outsourcing the thinking?

- Can you notice when the AI is following a generic pattern but the repository overrides that behavior?

A common example is when the AI uses a general cost model or "standard" interpretation from training data, but the code or tests in the repo override that behavior. You still need to catch that mismatch yourself.

Question Categories

The problems reported so far fit a few recurring patterns. Recognizing the category quickly helps you choose the right approach and review the AI output more effectively.

Simulation

Example: card game strategy. These questions test state management, simulation correctness, and strategy evaluation. The core skill is keeping state transitions consistent across repeated updates, such as making sure cards removed from the board are actually removed everywhere the state depends on them.
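The "removed everywhere" point can be made concrete with a small sketch. Everything here is hypothetical and not taken from any real interview repo: the `Board` class, the two-character card encoding such as `"7H"`, and the assumption that each card appears at most once. The idea is to route every removal through one method and to keep an invariant check you can call from tests.

```python
from dataclasses import dataclass, field


@dataclass
class Board:
    """Hypothetical card-game board that keeps two views of the same state."""

    cards: list[str] = field(default_factory=list)               # cards in play order
    by_suit: dict[str, set[str]] = field(default_factory=dict)   # suit -> cards on board

    def add(self, card: str) -> None:
        # Assumed encoding: the last character is the suit, e.g. "7H".
        self.cards.append(card)
        self.by_suit.setdefault(card[-1], set()).add(card)

    def remove(self, card: str) -> None:
        # Update every view in one place so they cannot drift apart.
        self.cards.remove(card)
        suit = card[-1]
        self.by_suit[suit].discard(card)
        if not self.by_suit[suit]:
            del self.by_suit[suit]

    def check_invariant(self) -> None:
        # The suit index must describe exactly the cards that are on the board.
        indexed = set().union(*self.by_suit.values())
        assert indexed == set(self.cards), "suit index out of sync with board"
```

When reviewing AI-generated simulation code, a check like `check_invariant()` after each simulated move is a cheap way to catch an update that touched one structure but not the other.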

Algorithm

Examples: maze solver and max unique characters. These questions often start with a simple BFS, DFS, or backtracking baseline and then add constraints that make the state space explode: keys, doors, directionality, or bombs. Passing the later parts usually means moving beyond the baseline toward pruning, memoization, or bitmask state compression.
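As an illustration of how a keys-and-doors follow-up changes a plain BFS, here is a minimal sketch. The grid encoding is an assumption made for this example, not the format of any specific interview problem: `@` is the start, `*` is the exit, `#` is a wall, lowercase letters are keys, and the matching uppercase letters are doors. The important change is that the visited set tracks `(row, col, keys)` rather than just `(row, col)`.

```python
from collections import deque


def shortest_path_with_keys(grid: list[str]) -> int:
    """Fewest steps from '@' to '*', or -1 if the exit is unreachable.

    Assumed encoding: '#' wall, '.' floor, 'a'-'z' keys, 'A'-'Z' doors.
    """
    rows, cols = len(grid), len(grid[0])
    start = next((r, c) for r in range(rows) for c in range(cols) if grid[r][c] == "@")

    # State is (row, col, keys_bitmask): a cell can be worth revisiting
    # once we hold a different set of keys.
    queue = deque([(start[0], start[1], 0, 0)])
    seen = {(start[0], start[1], 0)}

    while queue:
        r, c, keys, steps = queue.popleft()
        if grid[r][c] == "*":
            return steps
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            if not (0 <= nr < rows and 0 <= nc < cols):
                continue
            cell = grid[nr][nc]
            if cell == "#":
                continue
            new_keys = keys
            if cell.islower():
                # Picking up a key sets its bit in the mask.
                new_keys |= 1 << (ord(cell) - ord("a"))
            elif cell.isupper() and not keys & (1 << (ord(cell.lower()) - ord("a"))):
                continue  # locked door, key not collected yet
            if (nr, nc, new_keys) not in seen:
                seen.add((nr, nc, new_keys))
                queue.append((nr, nc, new_keys, steps + 1))
    return -1
```

A baseline that only tracks `(row, col)` would refuse to walk back through the start after collecting a key, which is the kind of bug worth checking for in generated code.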

Reverse Engineering

Example: compiler optimization. In this kind of problem you may need to inspect source, grep configs, or infer hidden parameters from examples instead of relying on a generic answer.
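One way to infer hidden parameters from examples, when the source does not state them, is to test candidate values against what the tests report. The sketch below is illustrative only: the operation names, the observed totals in `OBSERVED`, and the assumption that costs are small positive integers are all made up for this example.

```python
from itertools import product

# Hypothetical observations: (operation counts, total cost reported by a test).
OBSERVED = [
    ({"add": 2, "mul": 1}, 7),
    ({"add": 1, "mul": 2}, 8),
    ({"add": 3, "mul": 0}, 6),
]


def infer_costs(observed, ops, max_cost=10):
    """Return every integer cost assignment in 1..max_cost that fits all observations."""
    matches = []
    for candidate in product(range(1, max_cost + 1), repeat=len(ops)):
        costs = dict(zip(ops, candidate))
        fits = all(
            sum(costs[op] * count for op, count in counts.items()) == total
            for counts, total in observed
        )
        if fits:
            matches.append(costs)
    return matches


print(infer_costs(OBSERVED, ["add", "mul"]))  # [{'add': 2, 'mul': 3}]
```

If more than one assignment fits, the examples do not pin down the rule yet, and the next step is to find another test case or read the source rather than pick one arbitrarily.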

Takeaways

The Algorithmic Bar Can Still Be High

One of the biggest surprises in AI-enabled coding interviews is that the algorithmic bar can actually go up. Since the interview spends less time on syntax, the follow-up depth can be higher. Patterns like DP with bitmask, aggressive pruning, reverse engineering of hidden rules, and multi-stage state tracking still matter in this format.

Classic Meta Prep Assumptions Do Not Always Transfer

This matters because candidates sometimes prepare with the wrong assumption: "Meta almost never asks DP, so I probably do not need it." In classic interviews that may often be true, but in AI-enabled coding, if the scalable solution for a later part really is DP with bitmask, you are still responsible for recognizing that and guiding the solution toward it.

Less Syntax Pressure Creates More Room for Follow-Ups

In classic Meta coding prep, people often optimize heavily for clean syntax and standard medium-level algorithms. In AI-enabled coding, syntax pressure is lower, so the interview can spend more time on follow-ups, deeper optimizations, and harder variants.

How To Prepare

Preparing for AI-enabled coding interviews is not about getting the AI to write the code for you. It is about learning to review, guide, and improve the AI's output like a technical lead: treat the AI as a tool you supervise, not as the driver of the solution.

Build Pattern Recognition First

Start by building muscle memory around the recurring templates. For search and state-compression questions like maze or maximum unique characters, ask yourself immediately: do I need bitmask state, pruning, BFS, DFS, or memoization? For simulation questions like card game, think about state consistency and whether the board is being updated correctly. For black-box reverse-engineering questions like compiler optimization, prefer answers grounded in the repo over "common sense" guesses.

- Try AlgoMonster’s AI-enabled coding practice problems and treat them like real interview loops.

- Learn the patterns well enough to know what algorithm to use before you ask AI for code.

- Use the speedrun feature to strengthen fast pattern recognition and algorithm selection.

- Practice debugging from tests and source, not just from problem statements.

- Keep your implementation knowledge sharp, because debugging questions still require knowing what correct code should look like.

Use an Anti-AI Checklist

It also helps to keep a simple anti-AI checklist in your head before you accept any generated code: does the environment support these imports, is the model guessing a cost or reading it from the repo, will this complexity blow up on the larger tests, and did it handle deduplication or preprocessing correctly? If any answer is unsatisfactory, revise the plan before you paste anything in.

- Environment check: are the libraries, APIs, and language features actually available here?

- Common-sense check: did the AI read this rule from the repo, or is it guessing from training data?

- Complexity check: will this still work when larger simulation or optimization tests are introduced?

- Preprocessing check: did it handle deduplication, anagrams, caching, or pruning where needed?

- State check: does every update preserve invariants across repeated operations?
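To show what the complexity and preprocessing checks look like in code, here is a sketch for a "max unique characters" style problem. The exact problem statement is an assumption: given a list of lowercase words, pick a subset whose concatenation has no repeated letter and return the longest achievable length. The function name is hypothetical. Preprocessing drops words that repeat a letter and collapses anagrams into one bitmask, and the search then works on sets of letters instead of strings.

```python
def max_unique_length(words: list[str]) -> int:
    """Longest concatenation of a subset of words with all-distinct letters.

    Assumes lowercase 'a'-'z' input.
    """
    # Preprocessing: skip words with a repeated letter and collapse anagrams,
    # since only the set of letters in a word matters.
    masks = set()
    for word in words:
        mask = 0
        for ch in word:
            bit = 1 << (ord(ch) - ord("a"))
            if mask & bit:
                break  # repeated letter: this word can never be used
            mask |= bit
        else:
            masks.add(mask)

    # reachable holds every letter set that some valid subset can form.
    reachable = {0}
    for mask in masks:
        reachable |= {combo | mask for combo in reachable if not combo & mask}
    return max(bin(combo).count("1") for combo in reachable)
```

Storing combinations in a set means two different subsets that produce the same letters are explored only once, which is where most of the savings over naive string backtracking come from.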

Practice Human-AI Collaboration Language

Finally, practice the collaboration language itself. A good interview response sounds like: "The AI generated a naive version that passes the small cases, but the search space is still exponential. I want to steer it toward bitmask plus memoization." Or: "The AI is probably guessing the operator cost here. I’m going to inspect the codebase or infer the real weights from the tests instead of letting it continue hallucinating." That is the level of control companies are looking for.

Practice How AI Fails

Do not only practice ideal AI sessions where the model gets everything right. A better mock is to intentionally give AI incomplete context, watch how it goes wrong, and practice correcting it. That is much closer to the real interview. You need experience noticing when the model is overconfident, underspecified, or blindly following the wrong abstraction.

FAQ

What is an AI enabled coding interview?

An AI enabled coding interview is a technical interview where you solve coding tasks with access to an AI assistant, but you are still evaluated on problem solving, debugging, algorithm choice, and technical judgment.

How does the Meta AI enabled coding interview work?

The Meta AI enabled coding interview typically uses a CoderPad-style editor with an AI chat panel. You are still expected to drive the solution, review the model's output critically, explain tradeoffs, and adapt to follow-up requirements instead of treating AI as an autopilot.

Which companies use AI-enabled coding interviews?

Meta, Canva, Shopify, LinkedIn, and Rippling all run some form of AI-enabled coding interview. The format varies: Meta and LinkedIn use CoderPad with an AI chat panel, Shopify lets candidates use their own IDE, and Canva expects AI tool usage during screening rounds.

What makes AI-enabled coding different from a normal LeetCode question?

You are not solving from scratch in isolation. You need to inspect an existing project, understand tests, fix bugs, extend behavior, and validate AI-generated code against real constraints.

How should I use AI during practice?

Use AI to get syntax right, speed up boilerplate, and clarify parts of the problem or codebase you do not understand yet. If you give it enough context, it can sometimes solve substantial parts of the problem, but you should not assume that will always happen. Interview AIs are sometimes intentionally weaker than the best coding tools, so you still need to review the output carefully, run tests, and verify that the code actually matches the repo and the requirements.

Ready to Practice?

Try these AI-enabled coding problems and practice the format used at Meta, Shopify, Canva, and LinkedIn.